Source code

Features

The recruitment process

Those famously unbiased, next‑gen XDR/NGAV solutions that never miss a thing

LLVM-EX howto

A kernel‑level network connection hiding malware

A great invasion of your privacy

Data breach

A properly implemented kernel‑mode anti‑cheat system

HEX DEREF ANTI-MALWARE X: Zero-Trust mode at the application/process level

A previously unknown malware...

Detection-response based security does not necessarily recognize (and therefore cannot protect against) unknown threats, including new malware. This limitation applies to virtually all vendors listed on VirusTotal.com as well as the majority of enterprise-grade security providers, since they rely primarily on signature- or pattern-based detection. Behavior-based detection can easily generate false positives, because the same actions can be used for both legitimate and malicious purposes. Based on the author's earlier testing with an unpublished malware sample, and with only a few exceptions, these legacy detection-based endpoint security solutions typically discover and address unknown threats only after the threats have already caused damage to their customers.

An anti‑virus solution or malware can map out most of a company's internal network simply by examining the process list and their network connections — all without the user or the organization noticing. And when you think about unauthorized actions performed by an endpoint protection solution, this would definitely qualify — yet at the same time it's also part of the core functionality these systems rely on.

Think about home users who work remotely and read their email with something like Thunderbird, which is a popular free email client. If they rely solely on Defender, it will not even react when a running piece of malware maps out the company's connections and steals their credentials in the process. As a result, a corporate email compromise can occur without the user ever noticing.

Malware built for industrial espionage enumerates the network connections of active processes, including things like payroll systems. After it gains kernel access, the keylogger activates, and you can imagine the outcome.

A piece of malware running under standard user rights can inspect the process list to determine whether any process exposes a potential CVE‑????‑????‑type vulnerability that could be leveraged for privilege escalation.

You might be very mistaken when you say that even Defender is enough. It's not that straightforward once you understand what's happening under the hood and grasp the full concept.

Some of the most dangerous unknown threats are code-signed malware or ransomware that exploit previously undiscovered zero‑day vulnerabilities. Modern offensive AI models can now identify exploitable bugs far faster than any human researcher. This represents an entirely new level of capability in both cyber offense and defense.

At some point the malware has to report back to its operator that it has spread to a new device, and that C2-communication is the most critical detection vector for malware.

This is precisely where the kernel-level allow‑listing becomes critical: new and unknown threats simply do not execute in the first place. As a result, hunting for new threats becomes dramatically simpler and faster, because the only things that need to be examined are programs that have never been used within that organization before.

This is exactly why HEX DEREF ANTI‑MALWARE X includes a fully functional kernel‑level Authenticode verification mechanism: unknown or unsigned code simply never executes, not even for a fraction of a second, unless it is explicitly on the allowed list. The solution runs alongside every endpoint security solution and kernel‑level anti‑cheat. Malware doesn't know that this is an analysis tool.
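The default-deny idea described above can be sketched in a few lines of user-mode C++. This is only an illustration of the policy logic, not the product's kernel implementation: the `ImageInfo`, `Verdict`, and `AllowList` names are hypothetical, and the SHA-256 digest and the Authenticode result are assumed to be computed elsewhere.

```cpp
#include <algorithm>
#include <cctype>
#include <string>
#include <unordered_set>

// Hypothetical verdicts for a default-deny execution policy.
enum class Verdict { Allow, DenyUnsigned, DenyUnknown };

// Hypothetical description of an image that is about to be executed.
struct ImageInfo {
    std::string sha256_hex;  // hex digest, assumed to be computed elsewhere
    bool signature_valid;    // signature check result, assumed to be computed elsewhere
};

// Lowercase a hex digest so that lookups are case-insensitive.
inline std::string normalize_hex(std::string s) {
    std::transform(s.begin(), s.end(), s.begin(),
                   [](unsigned char c) { return static_cast<char>(std::tolower(c)); });
    return s;
}

class AllowList {
public:
    void add(const std::string& sha256_hex) { hashes_.insert(normalize_hex(sha256_hex)); }

    // Default deny: only an explicitly listed hash may run.
    // The two deny verdicts exist only to make later triage easier.
    Verdict check(const ImageInfo& img) const {
        if (hashes_.count(normalize_hex(img.sha256_hex)) != 0) return Verdict::Allow;
        return img.signature_valid ? Verdict::DenyUnknown : Verdict::DenyUnsigned;
    }

private:
    std::unordered_set<std::string> hashes_;
};
```

With a policy like this, the set of `DenyUnknown` and `DenyUnsigned` results is exactly the list of programs that have never been approved in the organization, which is the small set a threat hunter would need to review.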

A great invasion of your privacy

- Browsing history including Tor-browser

- Process list

- Process command-line data and network connections

- Internal network URLs may also end up being sent, since the product may not be able to tell internal addresses apart from external ones

- File history
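Telling internal addresses apart from external ones is not hard for literal IPv4 addresses. The sketch below is a minimal example of how a privacy-respecting agent could redact them before anything leaves the device. The function names are hypothetical, only dotted-quad IPv4 text is handled, and internal hostnames (for example an intranet DNS name) would need separate treatment.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <string>

// Parse dotted-quad IPv4 text into four octets; returns false on malformed input.
inline bool parse_ipv4(const std::string& text, std::array<std::uint8_t, 4>& out) {
    std::size_t pos = 0;
    for (int i = 0; i < 4; ++i) {
        if (pos >= text.size() || text[pos] < '0' || text[pos] > '9') return false;
        int value = 0;
        int digits = 0;
        while (pos < text.size() && text[pos] >= '0' && text[pos] <= '9') {
            value = value * 10 + (text[pos] - '0');
            ++pos;
            if (++digits > 3 || value > 255) return false;
        }
        out[static_cast<std::size_t>(i)] = static_cast<std::uint8_t>(value);
        if (i < 3) {
            if (pos >= text.size() || text[pos] != '.') return false;
            ++pos;
        }
    }
    return pos == text.size();
}

// True for RFC 1918 private ranges, loopback, and link-local addresses.
inline bool is_internal_ipv4(const std::string& text) {
    std::array<std::uint8_t, 4> o{};
    if (!parse_ipv4(text, o)) return false;
    if (o[0] == 10) return true;                               // 10.0.0.0/8
    if (o[0] == 172 && o[1] >= 16 && o[1] <= 31) return true;  // 172.16.0.0/12
    if (o[0] == 192 && o[1] == 168) return true;               // 192.168.0.0/16
    if (o[0] == 127) return true;                              // 127.0.0.0/8 loopback
    if (o[0] == 169 && o[1] == 254) return true;               // 169.254.0.0/16 link-local
    return false;
}

// Replace an internal address with a placeholder before it is logged or uploaded.
inline std::string redact_if_internal(const std::string& host) {
    return is_internal_ipv4(host) ? std::string("[internal]") : host;
}
```

For example, `redact_if_internal("192.168.1.5")` yields `[internal]`, while a public address such as `8.8.8.8` is passed through unchanged.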

What data do endpoint protection solutions collect (e.g., those listed on VirusTotal.com)? Browsing history is usually sent without an opt-out option. File names, sizes, and hashes (SHA-256) are sent as well, because without this information traditional anti-virus products cannot determine whether an executable (or some other file) on the device is malicious. Nothing on a user's device should be transmitted to a vendor's servers without explicitly asking the user for permission; otherwise the endpoint security product itself is performing unauthorized actions, which is effectively a data breach. At this point we are approaching GDPR violations, and this could even lead to the revocation of the EV code-signing certificate. None of the above can be disabled through any anti-virus solution's settings.

OV code‑signing

EV code‑signing

Usually files are named according to what they relate to. For example Supplier_shipments_03-2025.xlsx or Packaging_equipment_project_xyz.xlsx. It all depends on the company's naming standard. When a traditional anti-virus solution performs a "Scan all files" operation (IoC), it could, in principle, enable industrial espionage without the user's awareness, based solely on file names.

To my understanding, no endpoint protection product or kernel-level anti-cheat mechanism is permitted to transmit any files from a device without first obtaining explicit user consent; doing so would be functionally comparable to an information-stealing malware. In many products, automatic sample submission is enabled by default.

As a result, personal data may be sent from your device. At some point you have to trust something. The test malware mentioned in the article does not send anything out from the device — and even if it did, the data it sends is not used for any malicious purpose. There are no guarantees about how the data is used or who can access it, despite the polished privacy statements. And if we consider features like protected folders, that alone would already point to a very clear place to start…

Data breach

A single breach can compromise weapons development, military readiness, or national security, and in many cases one data breach is enough to expose a company's most critical trade secrets. For a software company, that means the source code; for a pharmaceutical company, it means proprietary formulas and manufacturing processes; and for other industries, it may be the core intellectual property that defines their entire competitive advantage.

Let's start by signing you in and bringing over your passwords, browsing history and more from Microsoft Cloud. If this data cannot be accessed for one reason or another, then in practice the instance or the entire company is unable to operate — even payroll would become impossible to process.

A data breach already occurs at least partially the moment your sensitive data is transferred into a third‑party cloud service. All of that data should be encrypted or otherwise protected before being uploaded to any of these services.

What puzzled the author even more was that in none of the data‑breach investigation reports or ransomware incident analyses was there ever any mention of what cybersecurity solution the affected organization had been using — or whether they had any at all besides Defender.

One of the services (CIR) charged a 5,000 USD upfront fee and then 300 USD per hour. That's far from inexpensive, especially when the site provides no description of the service. And a reinstallation won't do much if the attacker gained access through a zero‑day vulnerability. In any case, breaches like these shouldn't be happening very often — if at all — at the device level when using a solution like HEX DEREF ANTI‑MALWARE X, assuming it's operating in a zero‑trust mode.

If the root cause was a zero‑day vulnerability, the attacker is likely to return unless the ransom was paid. The author even contacted several of these CIR providers and quickly realized that many of them are essentially cash‑grabs or outright scams.

Determining the root cause of a data breach isn't quite that straightforward. Malware may be deployed through remote access using credentials exposed in a breach, insider-launched malware, an undisclosed 0-day vulnerability, or triggered accidentally by an employee without malicious intent. If you rule out all other possibilities, the only thing left is the data collected through the core functionality of the endpoint protection itself — data that can ultimately be used against the very organization it was originally meant to protect.

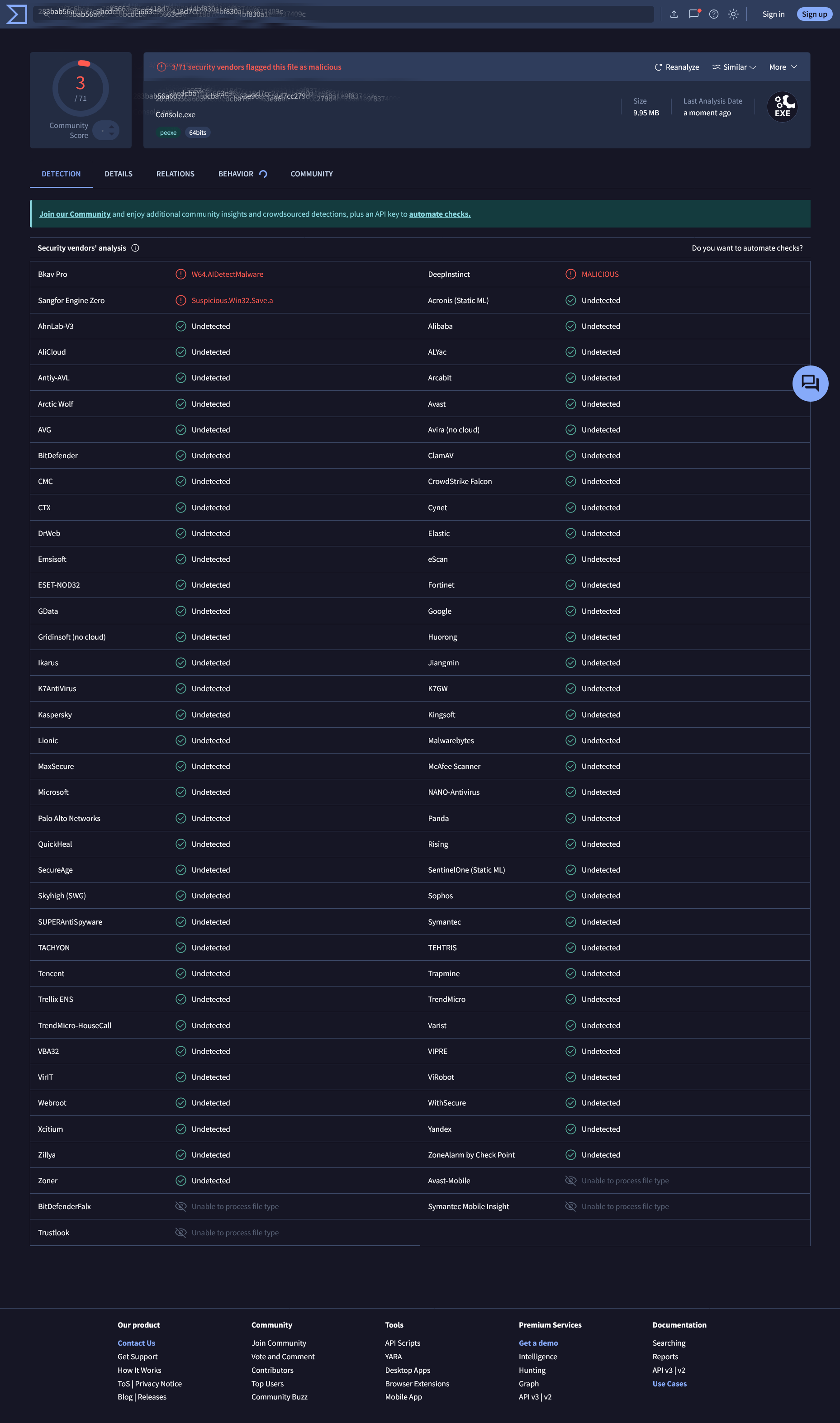

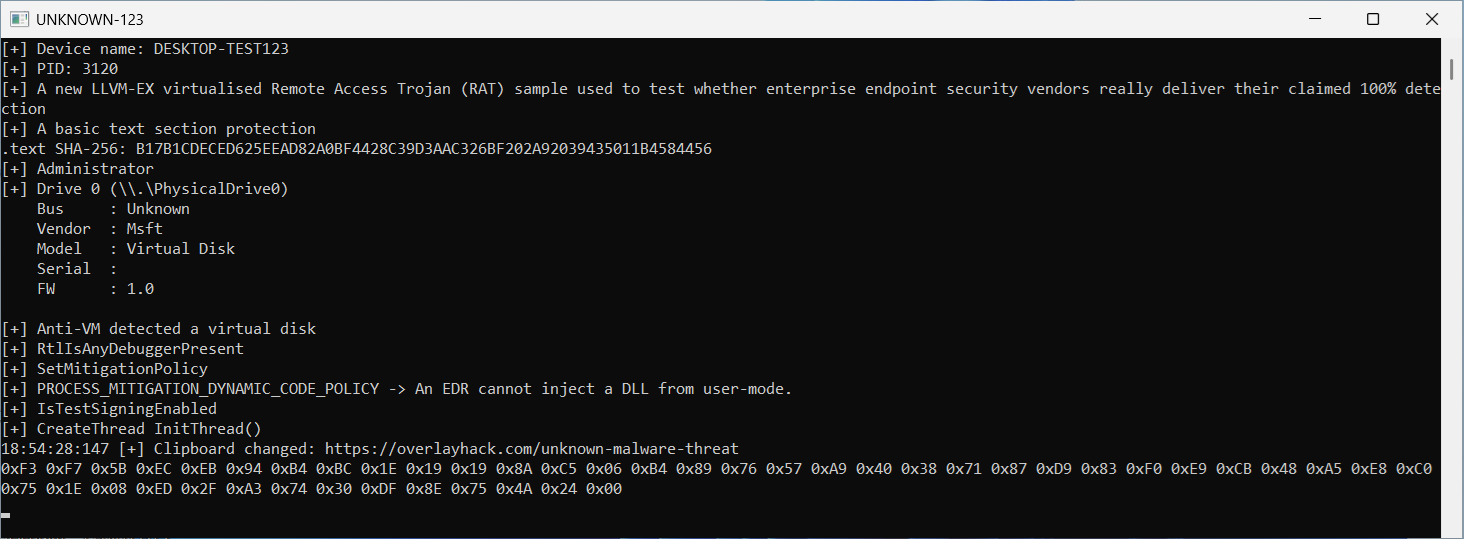

LLVM-EX virtualized sandbox aware malware

Using a tool like llvm-msvc-ex to virtualize your malware can significantly increase the complexity of reverse engineering or modifying the code. This is because obfuscation techniques such as control flow flattening, bogus control flow, and VM-based obfuscation make the malware harder to analyze and understand. These techniques can deter attackers or researchers from tampering with or reverse engineering the malware. With the right LLVM obfuscation passes, a C/C++ malware sample automatically becomes a unique version that evades pretty much all malware analysis sandboxes. Reversing an LLVM-virtualized piece of malware (especially reversing the C2 communication) requires significant expertise, time, and money. It demands a highly skilled professional team. As a loader-type malware, each version is automatically unique (string encryption and so on). Static detection therefore lags far behind, and based on my initial tests, this got through all enterprise solutions, even those that proudly advertise themselves as so-called leading solutions. It likely renders even a sophisticated sandbox largely ineffective, ultimately forcing a dynamic analysis.

Note that this LLVM-EX version has been modified so that it can virtualize only the functions you choose, because if we're talking about a P2C, you obviously can't obfuscate the entire executable, for reasons that should be quite self-evident. A sandbox environment's static analysis may interpret this as polymorphic malware. Only 1 to 3 of 72 security vendors (roughly 1.4% to 4.2%) flagged this file as malicious, so I defeated almost every static analysis sandbox. So much for 100% detection. I tested them all with an unknown threat: a sophisticated, modularized info-stealing malware written in C/C++.

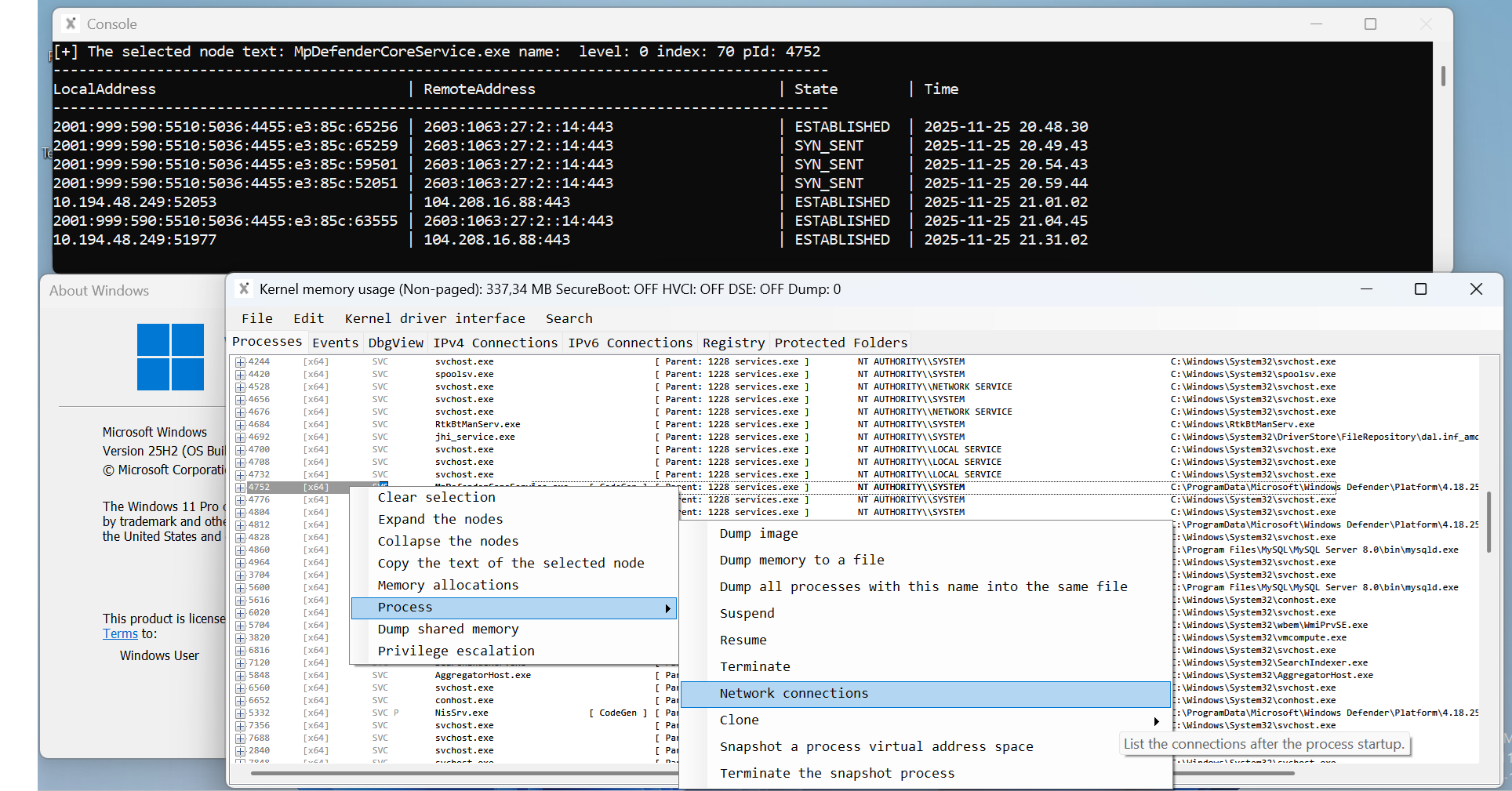

Those famously unbiased, next-gen XDR/NGAV solutions that never miss a thing

How can you do threat hunting for new, unknown threats with such limited or non-existent process and command-line data, not to mention the missing process network connections? You can't easily determine the root cause of a breach at the process or device level if the device user or SOC doesn't have unrestricted access to this crucial information. Or can you? If you've read even a single analysis of a somewhat sophisticated ransomware strain, you'll know that even today's basic ransomware variants wipe all logs and make forensic analysis on the disk extremely difficult, if not nearly impossible.

An endpoint security solution that doesn't even inform the user at a basic level about possible malware persistence has received full scores/awards in these tests. The site refers to an award‑winning solution. My intention was also to highlight the misleading product marketing used on these sites. This is highly questionable behavior and amounts to distorting fair competition. Customers end up purchasing a product that does not match the description advertised on the website. A malware analyst from the same company — or another one — writes an article about the malware's persistence. But why doesn't their own solution even notify the user that a process added persistence? Or maybe they sacrificed features for the sake of stability, leaving the product mostly with static detection.

Some endpoint security solutions advertise a 100% detection rate. Makes me wonder if there's some bias in these tests, since none of them answered my question about whether they have ever tested with previously unpublished malware that has no static detection. Whenever someone releases something for free, it undermines the competition. And then when you try to build something commercial around the same idea, the first thing people ask is: what makes yours better, and why should anyone pay for it — especially when Windows Defender is free. With investors, even garbage can turn into a scam.

Consider an endpoint security solution that does not alert the user, even at the most basic level, about behavior that is clearly characteristic of malware. This information has been publicly available for at least a decade, long before generative AI existed. So how is it possible that such a product has received perfect scores in every test?

Free anti-virus isn't actually free; the collected data covers the development costs — at least as far as I understand. Full scores have been achieved in several tests, and the product has become award‑winning. These tests are the basis on which people buy these products.

There is no solution on the market that achieves 100% detection. It is also important to note the misleading marketing claims found on various vendor websites, where long market presence is implied to equal superior protection. Market research shows that many, if not most, vendors sell multiple product versions under different names, even though the actual technical differences between them are often purely cosmetic. The screenshot below, captured during my unbiased testing, supports my claims.

The detections are primarily classic false positives caused by virtualization/obfuscation.

Reading the /r/Malware subreddit makes it clear that new malware and new variants emerge daily. An anti-virus solution that relies solely on static signature-based detection is a thing of the past. And honestly, if an endpoint protection product can't even alert the user when a program exhibits behavior typical of malware, that should raise serious concerns. What other approach besides kernel-level allow-listing based endpoint protection can realistically stay ahead of these threats? Solutions like HEX DEREF ANTI-MALWARE X demonstrate why deeper, kernel-integrated defenses matter.

An LLVM‑virtualized piece of malware can bypass virtually all static detection mechanisms and turn analysis into an excessively time‑consuming process (as shown above). Although competing vendors may publish articles about their malware‑analysis capabilities, my preliminary testing suggests that many of the issues highlighted in those write‑ups remain unaddressed in the actual product implementations. It reminds me of a situation where a professional team was hired to analyze malware but chose not to consult the developers responsible for the kernel‑level sensor — a rather puzzling decision.

When you look at the industry's marketing, certifications, and the flawless scores handed out by testing labs, it's easy to believe that today's security products are fully prepared for real‑world threats. But the reality is far less reassuring. When an actual, modern cyberattack strikes, all those polished numbers and promises evaporate in seconds. That's the moment when the thinness of the protective layer becomes painfully obvious — and it happens only after the damage is already done. Organizations discover too late that the tests never reflected real adversaries, and the marketing never told the whole story. A cyberattack doesn't wait for anyone to update reports or adjust scoring criteria. It exposes what works and what doesn't, and it does so only after the consequences are already irreversible.

You've positioned yourselves as some sort of authoritative entity — so isn't this exactly the kind of thing that borders on knowingly misleading investors? Not to mention undermining fair competition.

When I applied for these positions (for example, Windows kernel sensor developer, which already requires highly specialized expertise), I attached a clear proof-of-concept video to my application, which in most cases was exactly what the job posting was asking for. I got the impression that these recruiters don't even conceptually understand how malware or endpoint protection works, and therefore have no real idea who they should be hiring.

In most cases I didn't receive even a basic reply. It made me wonder whether they're just using fake job postings to collect as much information for free as possible.

The skill set presented in this article doesn't seem to meet the expectations of these recruiters. The videos attached to the article were produced with the dedication of a single person over a long period of time, yet they still weren't good enough for those who only crave shallow, trivial content. Your product development simply can't keep up with the competition when you refuse to hire the right talent.

The recruitment process somehow seems to support the idea that sensitive data is being collected without permission under the pretext of various features, unless the entire solution has been deliberately designed for spying on the user. In that case, the endpoint security solution ends up turning against the very company it was originally meant to protect.

Kernel-level malware

- Capable of hiding a network connection at the process level (Windows 10 22H2 - Windows 11 25H2)

- Block process network queries entirely, or only for a specific process PID

Hiding a process's network activity from other applications. Hide connections belonging to a specific browser or VPN process. This is a unique C/C++ implementation consisting of a user-mode (UM) component and a kernel-mode (KM) Windows driver. It allows you to limit which user processes may request network connections. It is the kernel-level equivalent of netstat -ano, and it even works in a manually mapped driver. This isn't about hiding from the user; it's about hiding from malware. Even malware running with only standard user privileges can map internal network addresses and the processes that connect to them. And since the kernel-level attack surface has been overlooked for years, the most severe ransomware attacks can still succeed. With that same capability, it is also possible to hide the network activity of a RAT-type malware, including its C2 communication.

Network connection hiding: Hide network connections from tools like netstat, and also from any other user-mode processes that query connection information through the NSI layer. As a malware-analysis feature, this is a useful capability: you can immediately see all user processes that are querying other processes' network connections. For at least a decade this was a blind spot: a quiet, undocumented subsystem that malware could hook without triggering PatchGuard.
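Once such queries are visible, reviewing them is straightforward. The following is a minimal sketch of how logged query events could be summarized for an analyst. The `ConnQueryEvent` record and its fields are hypothetical and do not describe the product's actual log format; the only assumption is that each event records who asked and whose connections were requested.

```cpp
#include <cstddef>
#include <cstdint>
#include <map>
#include <set>
#include <string>
#include <vector>

// Hypothetical log record: one process asked for connection information.
struct ConnQueryEvent {
    std::uint32_t requester_pid;   // process that issued the query
    std::string requester_image;   // its image name
    std::uint32_t target_pid;      // whose connections were requested; 0 means "all processes"
};

// Count queries about other processes' connections, per requesting image,
// skipping tools that are expected to do this (for example netstat.exe).
inline std::map<std::string, std::size_t>
summarize_foreign_queries(const std::vector<ConnQueryEvent>& events,
                          const std::set<std::string>& expected_images) {
    std::map<std::string, std::size_t> counts;
    for (const auto& e : events) {
        const bool foreign = e.target_pid != e.requester_pid;
        if (foreign && expected_images.count(e.requester_image) == 0) {
            ++counts[e.requester_image];
        }
    }
    return counts;
}
```

Any image that shows up in the result is a program that enumerated connections it does not own without being on the expected list, which makes it a reasonable first candidate for closer inspection.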

Isn't it strange that something like this still slips past these endpoint security products, and yet the testers keep handing out perfect scores in every single test?

https://securelist.com/daemon-tools-backdoor/119654/

This is precisely why HEX DEREF ANTI-MALWARE X logs all process network connections into its database without restrictions, and why home users have the option to disable this feature: the solution respects user privacy, unlike all the others.

Does the reader now understand what this is about? Shouldn't the privacy statement clearly mention that it logs all process network connections? Think about a person who works remotely and connects from a home device to the company network. If it's, for example, remote support, the cybersecurity software on that device 'sees' which company network process X connected to. In a wartime situation at the latest, the data that has been collected may start being used for cyberattacks.

This kind of thing has been running undetected on both personal and corporate devices for about a decade now, without users having the slightest clue.

I published this project for several reasons. One of the reasons is to support my job applications. Secondly, something like this is needed for testing the logic of an endpoint security solution — and of course for unbiased XDR/NGAV endpoint security product testing. That is why the test project's UNKNOWN-123 source code is available for purchase as a software work for educational and informational purposes only — so you can literally see for yourselves that you have been sold nothing more than pseudo‑cybersecurity for years. Because NIS2, DORA, and GDPR require organizations to regularly test their cybersecurity posture, the author published this article using an advanced Red Team test malware that simulates real‑world cyberattacks.

The source code is sold as software work for APT simulation, which mirrors a real cyberattack. None of the endpoint security vendors have any sample of this. That's why the project is fittingly named UNKNOWN‑123.

As a byproduct of developing an anti-malware solution, I have a kernel-level anti-cheat system that detects more cheaters than EAC, BattlEye, and EAAC combined and is almost production-ready.

This could be offered directly to any game developer on Windows as a standalone anti-cheat implemented as software work.

My solution literally forces cheaters down to user mode, and it is almost impossible to bypass.

This is a unique implementation (all kernel‑level code has already been tested for stability) that can identify cheaters in both user space and kernel space. Even a single cheater is detected during the very first game round.

It delivers a clear competitive edge over XIGNCODE3 with its effortless integration, and most importantly, my implementation guarantees zero false bans. This makes it a superior choice for any game studio seeking reliable, next-generation protection.

Even a smaller software company can instantly outperform major industry anti-cheat vendors by using my concept and the fully functional HVCI-compatible kernel driver implementation, which passed the Microsoft kernel driver approval process without a single issue. This gives you a level of credibility, stability, and protection that most established vendors still struggle to achieve.

What I mean is the zero‑trust model in my anti‑malware, where strict allowlisting is enforced. Applied to a league anti‑cheat like FaceIt, this would be a highly competitive approach. With this model, process inspection would no longer be necessary. The author of the article is the lead developer of this endpoint security solution: https://hexderef.com/UNIT-123-PART-1/UNIT-123-PART-1_player.html

These providers started developing kernel-level anti-cheat systems without understanding the core issue or the underlying reason why cheating continues in the games protected by these solutions despite their years of effort. To back up my skills, I've also developed an advanced bypass: https://overlayhack.com/eac-eaac-anti-cheat-bypass/1038

Recognizing the problem: when an HWID-locked P2C loads into the kernel before the anti-cheat, it gains the ability to run sensitive or potentially dangerous operations unchecked. If a cheater reaches the kernel before the anti-cheat, cheating will continue in every game it's supposed to protect. But the author claims to have a real, complete fix for the problem. I can demonstrate at any time that cheating stops just as abruptly as driving a car into a wall at 120 km/h: the driving ends instantly.

First of all, my kernel-level implementation creates an almost impenetrable barrier, forcing any cheat to operate strictly in user mode. This is extremely difficult to bypass, even for a seasoned professional. As the lead developer of an anti-malware I know exactly what I am talking about.

I have developed a unique solution that forces the attacker back to user mode, effectively blocking the most critical kernel-level attack vector. If someone were somehow able to bypass this mechanism, my second detection method will still detect the cheater. As far as I know, no other kernel-level anti-cheat currently implements these techniques. My implementation needs virtually no maintenance. Even if a cheater reaches the kernel before the anti‑cheat initializes, it still exposes the cheater's process PID, no matter how they got into the kernel. Voyager‑type approaches won't help them anymore. For P2C providers, this is game over.

The concept, as well as the kernel‑level driver code I have validated for long‑term stability, has been ready for years. The concept and implementation details are protected under an NDA. I can provide a conceptual overview for a 14,999 USD BTC upfront payment, once the agreement is acknowledged from the CEO@gamestudio.com email address. This information and any associated source code shall not be disclosed, distributed, or otherwise made available to any third party under any circumstances.

The total price for this project is 49,999 USD in BTC for a non‑exclusive license to the Windows kernel driver source code. The price includes the C++ source code for the service (SVC) process as well as the required LLVM-EX virtualization, which makes reversing the UM-KM communication as difficult and time‑consuming as possible. I recommend purchasing the full package with source code, as the implementation is quite straightforward. With any modern P2C, I can demonstrate that even one cheater is detected during a single game session while my solution is active.

If the concept or fully working kernel‑level code I demonstrate in the video below were to leak to the providers mentioned above, every P2C vendor would literally go bankrupt — or they would have to knowingly sell an exit scam. Likewise, any game studio using these solutions could immediately develop its own alternative without needing to rely on these so‑called mainstream providers anymore.

- Perfect integration with the anti‑cheat, because it includes maintainable HVCI‑compatible kernel driver source code

- Players regain trust in the developer, as no sensitive data is shared with third parties anymore

- Even a single cheater is detected during the very first game round with this

- Detects the cheat software from process memory as well as from kernel memory

- Easy to integrate with the game and requires very little maintenance

- No false bans

- Runs alongside every anti-virus vendor

As long as a cheater can access the kernel before or after the anti‑cheat, cheating in games will continue almost as before. I need to point out that what has been sold to you as developers is essentially pseudo kernel-level anti-cheat. The situation changes fundamentally if you adopt my implementation.

Now that fair play has become almost impossible in these games, many players who previously played legitimately end up purchasing P2C services, which only worsens the problem.

An unidentified malware threat could just as easily be the kernel‑level anti‑cheat. Has anyone thoroughly audited these solutions? When relying on a third‑party anti‑cheat, where are the assurances that any collected data will not be misused?

BattlEye - The Anti-Cheat Gold Standard. It's remarkable. I reached out to them multiple times through their website's contact form over the past couple of years without receiving any response. They present themselves as an anti-cheat standard, yet they decline to evaluate a functional solution that could effectively address cheating across games. Emails sent to the address listed on their website also went unanswered, even though every game supposedly protected by their anti-cheat is basically a cheaters' festival.

The player numbers practically advertise themselves. If players see that the anti‑cheat outperforms the competition, the company can be almost certain that its other products will benefit too.

https://steamdb.info/tech/AntiCheat/BattlEye/

https://steamdb.info/tech/AntiCheat/EasyAntiCheat/

For reference, EAC operates at a scale of roughly 10,000,000 USD per year. They have an office in Finland, so I was able to verify their revenue through our national business registry.

I only need to port these existing components from my anti‑malware driver into a separate anti‑cheat driver, and in addition we only need a relatively simple SVC process. With roughly three months of work — provided someone covers the 14,999 USD upfront payment — we can deliver a significantly better competing solution in a very short time and in a highly cost‑effective way. So if you, for example, have a software company and a valid EV code‑signing certificate, feel free to get in touch.

The funniest part is that the Anti‑VM feature can be used against P2C providers who try to avoid game bans. My implementation is refined enough that even buying a new device won’t help them. I have kernel‑level code that can detect a virtual machine with near certainty. And if you spend even a little time reading the forums, you’ll see that cheats are being sold for every EAC‑protected game as well — people go in and rage‑hack servers just for fun. All of this ends during the very first match when using the anti‑cheat developed by the author of the article.

Features

Coded in C/C++. It operates and performs actions depending on whether it is running as a regular user or with administrator privileges. The settings are configured in the Config.h file, meaning it cannot be identified based on command-line parameters. LLVM-EX virtualization makes every build unique, so static detection does not work against it.

- Anti-VM

- Anti-Debug

- Anti-Inject

- Anti‑Static analysis

- IAT Hiding

- Clipboard DATA

- Disk serials (NVMe etc.)

Anti-VM: Sandbox aware malware can render even a sophisticated sandbox largely ineffective, ultimately forcing a manual analysis. If malware detects that it is running in a virtual machine, it may refrain from executing any malicious actions.

All of these features are commonly used in commercial software to provide even a basic level of copy protection. For example, using the disk's serial number allows you to bind the license to a specific device. And for obvious reasons, any function related to copy protection must of course be virtualized.
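The license-binding idea can be sketched as follows. This is a minimal illustration under stated assumptions, not the author's implementation: the function names are hypothetical, the disk serial is assumed to be obtained elsewhere, and FNV-1a is used only as a simple stand-in for the keyed cryptographic signature or MAC that a real licensing scheme would need.

```cpp
#include <cctype>
#include <cstdint>
#include <string>

// Strip whitespace and uppercase the serial, on the assumption that the same
// disk may be reported with different padding or letter case.
inline std::string normalize_serial(const std::string& raw) {
    std::string out;
    for (unsigned char c : raw) {
        if (!std::isspace(c)) out.push_back(static_cast<char>(std::toupper(c)));
    }
    return out;
}

// FNV-1a 64-bit hash. Non-cryptographic: used here only to keep the sketch short.
inline std::uint64_t fnv1a64(const std::string& data) {
    std::uint64_t h = 0xcbf29ce484222325ULL;  // FNV offset basis
    for (unsigned char c : data) {
        h ^= c;
        h *= 0x100000001b3ULL;                // FNV prime
    }
    return h;
}

// Fingerprint stored in the license at activation time.
inline std::uint64_t device_fingerprint(const std::string& disk_serial) {
    return fnv1a64(normalize_serial(disk_serial));
}

// At startup, compare the licensed fingerprint with the current device.
inline bool license_matches_device(std::uint64_t licensed_fingerprint,
                                   const std::string& disk_serial) {
    return licensed_fingerprint == device_fingerprint(disk_serial);
}
```

A license issued for one serial keeps working when the same serial is reported with different spacing or case, and is rejected on a device whose disk reports a different serial.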

If you have a company and your own legitimate EV code‑signing certificate, send me an email from your company address if you're interested in some kind of collaboration. Please also include your Telegram in the email. Thank you.